
GenAI Admin Portal

Azure OpenAI/ChatGPT Conversational Service with Copilot Studio

Intumit GenAI Admin Portal integrates LLMs and Copilot features to create cutting-edge artificial intelligence products based on NLU/NLP.

Utilizing Azure OpenAI/ChatGPT models, our customers can leverage the latest Copilot and NLP technology to automatically generate training data, detect intents, and generate initial commands to streamline the conversation building process, reducing time and manpower requirements.

GenAI Admin Portal is designed to use less training data while providing higher accuracy. It delivers conversational, generative AI, and Copilot services that support multiple languages, enabling multinational brands and companies to create chatbot solutions at lower cost. We also support Agentic AI (Multi-model AI Agents) for solutions tailored to each client.

To improve customer and employee experiences, Intumit prioritizes the predictiveness, applicability, and versatility of its Copilot features during development, and fine-tunes them accordingly.

Intumit GenAI Admin Portal can create a personalized Copilot feature that best fits enterprise needs. Examples include contract comparison, meeting note summaries, fraud/scam detection, and more.

Semantic Search, Automatic Documentation, Voice Interaction, and Multilingual Support are the most used modules.

Transform static FAQs into a specialized structure using key domain terms with machine learning.

When a user poses multiple questions in a single inquiry, the chatbot retrieves the matching answers from the FAQ knowledge base. It then uses an LLM to blend them into a single response that meets the customer’s expectations, handling challenging and complex queries.

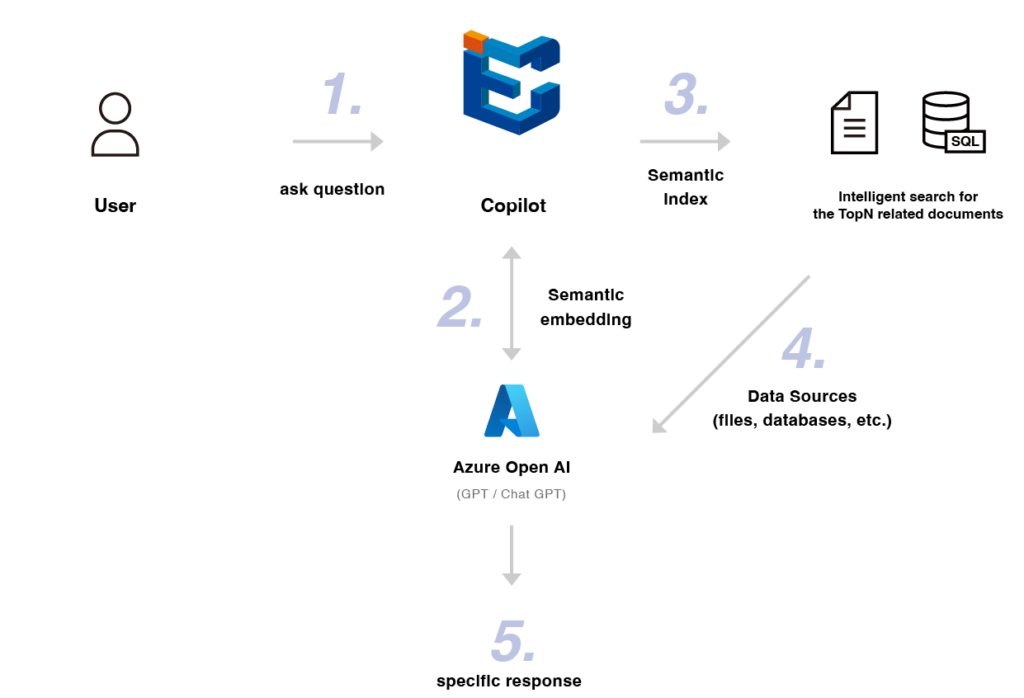
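The multi-question flow described above can be sketched roughly as follows. This is a minimal illustration, not the portal's implementation: the FAQ entries, the function names (`split_inquiry`, `best_match`, `build_blend_prompt`), and the word-overlap scoring are all assumptions made for the example, and the final LLM call is left out, so the sketch stops at the prompt that would be sent.

```python
import re

# Hypothetical FAQ knowledge base; the entries are illustrative only.
FAQ = {
    "How do I reset my password?": "Use the 'Forgot password' link on the sign-in page.",
    "What are your support hours?": "Support is available 9:00-18:00 on weekdays.",
    "How do I update my billing address?": "Edit it under Account > Billing.",
}


def tokens(text):
    """Lowercase a string and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def split_inquiry(inquiry):
    """Naively split a compound inquiry after each question mark."""
    parts = re.split(r"(?<=\?)\s+", inquiry.strip())
    return [p for p in parts if p]


def best_match(question, faq):
    """Return the FAQ question with the highest word overlap (Jaccard), or None."""
    q = tokens(question)

    def score(entry):
        e = tokens(entry)
        return len(q & e) / len(q | e)

    best = max(faq, key=score)
    return best if score(best) > 0 else None


def build_blend_prompt(inquiry, faq):
    """Collect one FAQ match per sub-question and build a prompt for an LLM to blend."""
    matched = []
    for part in split_inquiry(inquiry):
        hit = best_match(part, faq)
        if hit and hit not in matched:
            matched.append(hit)
    context = "\n".join(f"- Q: {q}\n  A: {faq[q]}" for q in matched)
    prompt = (
        "Answer the customer's inquiry in one coherent reply, "
        "using only the FAQ entries below.\n"
        f"FAQ entries:\n{context}\n"
        f"Inquiry: {inquiry}"
    )
    return matched, prompt


if __name__ == "__main__":
    _, example_prompt = build_blend_prompt(
        "How can I reset my password? And what are the support hours?", FAQ
    )
    print(example_prompt)
```

A production system would likely replace the word-overlap score with semantic matching and pass the prompt to the configured LLM; the sketch only shows how several retrieved answers end up in one request.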

Uses the Azure OpenAI integrated large language model (LLM) to generate answers by processing uploaded documents and user queries. Users can get answers from unstructured text documents without creating and training individual FAQs.

With the Azure OpenAI integrated large language model (LLM), users can generate reference answer content by providing only the question, with settings for the tone, role, and direction of the response.
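As a rough illustration of how tone, role, and direction settings might be combined with a question, the sketch below builds a chat-style message list (a system message plus a user message). The `AnswerSettings` class, its default values, and `build_messages` are hypothetical names invented for this example; the portal's actual settings and request format are not documented here.

```python
from dataclasses import dataclass


@dataclass
class AnswerSettings:
    # Hypothetical settings mirroring the tone, role, and direction options.
    tone: str = "friendly"
    role: str = "customer support agent"
    direction: str = "keep the answer under three sentences"


def build_messages(question, settings):
    """Turn a question and its settings into a system + user message pair."""
    system = (
        f"You are a {settings.role}. "
        f"Write in a {settings.tone} tone. "
        f"Guidance: {settings.direction}."
    )
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


if __name__ == "__main__":
    for message in build_messages("How do I return an item?", AnswerSettings(tone="formal")):
        print(message["role"], "->", message["content"])
```

Keeping the settings in a separate object means the same question can be regenerated with a different tone or role without rewriting the question itself.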

Upload STT, LINE CALL voice files, or other voice system files to the platform for voice content parsing, and generate key summaries or Q&A based on the parsed content.

Optimize answer content for tone, different language variations, response methods, and more.

Build chatbots quickly with fewer training resources

Integrate traditional flyers, reports, registrations, and email notifications into a single channel

Execute extensive and complex tasks through AI-powered conversations

Handle multiple languages without the need for translation personnel

Learn complex structural logic like a human

Design marketing and shopping guide processes

Shorten service development time

Reduce expenditure costs

Obtain more precise answers

Agentic AI (AI Agents) for tailored services and tasks

GenAI Admin Portal – Azure AI/ChatGPT Conversational Service